Abstract

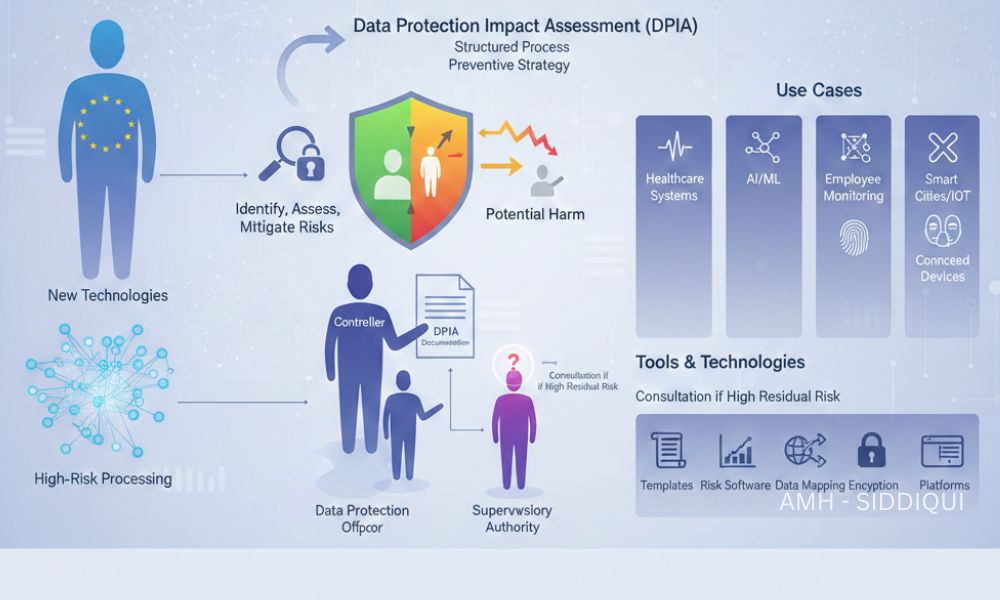

EU GDPR Article 35 introduces the concept of the Data Protection Impact Assessment (DPIA), a proactive compliance mechanism designed to identify, assess, and mitigate risks to individuals’ rights and freedoms arising from data processing activities. Particularly relevant when organizations adopt new technologies or engage in high-risk processing, Article 35 ensures that data protection is embedded into systems and processes from the very beginning. By mandating DPIAs in specific scenarios, the GDPR strengthens accountability, transparency, and responsible data governance across organizations.

Explanation

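Article 35 requires controllers to conduct a Data Protection Impact Assessment, before processing begins, whenever a type of processing is likely to result in a high risk to the rights and freedoms of natural persons. Article 35(3) names three cases in which a DPIA is always required: systematic and extensive evaluation of individuals based on automated processing, including profiling, that feeds decisions with legal or similarly significant effects; large-scale processing of special categories of data or of data on criminal convictions and offences; and large-scale systematic monitoring of publicly accessible areas. Beyond these cases, the obligation is especially relevant when processing involves innovative technologies, large-scale data collection, systematic monitoring, or sensitive personal data.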

A DPIA is not merely a formality; it is a structured process that evaluates how personal data is collected, used, stored, and protected. The assessment helps organizations anticipate privacy risks before they materialize and adopt appropriate safeguards to minimize harm.

The regulation emphasizes a risk-based approach, meaning not all processing activities require a DPIA. However, when risks are elevated, the controller must assess factors such as the nature, scope, context, and purposes of processing. If the DPIA indicates that risks remain high despite mitigation efforts, the controller is required to consult the supervisory authority before proceeding.
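The screening logic described above can be sketched in a few lines of Python. This is a minimal illustration, not a legal test: the `ProcessingProfile` class, its four flags (taken from the factors named in this section), and the threshold of two factors are all assumptions made for the example. A real screening exercise would follow the criteria published by the relevant supervisory authority.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessingProfile:
    """Hypothetical screening flags for one processing activity."""
    innovative_technology: bool = False
    large_scale: bool = False
    systematic_monitoring: bool = False
    sensitive_data: bool = False


# Assumption: two or more factors count as "likely high risk".
# The cut-off is illustrative only and is not taken from the GDPR text.
HIGH_RISK_THRESHOLD = 2


def count_risk_factors(profile: ProcessingProfile) -> int:
    """Count how many of the screening flags are set."""
    return sum([
        profile.innovative_technology,
        profile.large_scale,
        profile.systematic_monitoring,
        profile.sensitive_data,
    ])


def needs_dpia(profile: ProcessingProfile) -> bool:
    """Return True when the profile meets the illustrative threshold."""
    return count_risk_factors(profile) >= HIGH_RISK_THRESHOLD


def needs_prior_consultation(dpia_required: bool, residual_risk_high: bool) -> bool:
    """Consultation applies only if a DPIA was needed and risk stays high."""
    return dpia_required and residual_risk_high


# Example: large-scale camera monitoring sets two flags.
cctv = ProcessingProfile(large_scale=True, systematic_monitoring=True)
print(needs_dpia(cctv))  # prints True
```

Keeping the screening step separate from the consultation check mirrors the two decisions in the text: first whether a DPIA is needed at all, and only afterwards whether residual risk is still high enough to involve the supervisory authority.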

Key Points

- A DPIA is mandatory when processing is likely to result in high risk to individuals’ rights and freedoms

- Applies in particular to new technologies and innovative processing methods

- Focuses on preventing harm rather than reacting to breaches

- Requires assessment of necessity, proportionality, and risks

- Encourages privacy by design and by default

- May require consultation with the supervisory authority if residual risks remain high

- Must be documented and kept up to date

General Activation Steps

To comply with Article 35, organizations typically follow these structured steps:

- Identify the Need for a DPIA: Determine whether the processing activity meets high-risk criteria, such as large-scale profiling or handling sensitive data.

- Describe the Processing Activity: Clearly outline what data is collected, how it is processed, who has access, and how long it is retained.

- Assess Necessity and Proportionality: Evaluate whether the processing is essential for its stated purpose and whether less intrusive alternatives exist.

- Identify Risks to Individuals: Analyze potential impacts on privacy, data security, discrimination, identity theft, or loss of control over personal data.

- Define Risk Mitigation Measures: Implement technical and organizational safeguards such as encryption, access controls, anonymization, and staff training.

- Document Outcomes: Maintain written records of findings, decisions, and implemented measures.

- Consult Authorities (If Required): If high risks cannot be adequately mitigated, consult the relevant supervisory authority before processing begins.
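The steps above can be captured in a simple record structure. The sketch below is one possible shape, assuming a 3x3 likelihood and severity matrix and a cut-off score of 6 for "high" risk. The class names (`Risk`, `DPIARecord`), the field names, the scoring scheme, and the sample data are all hypothetical; the GDPR does not prescribe a scoring method.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import List, Optional


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# Assumption: a score of 6 or more on the 3x3 matrix counts as high risk.
HIGH_RISK_SCORE = 6


@dataclass
class Risk:
    """One identified risk to individuals, before and after mitigation."""
    description: str
    likelihood: Level
    severity: Level
    mitigations: List[str] = field(default_factory=list)
    residual_likelihood: Optional[Level] = None
    residual_severity: Optional[Level] = None

    def score(self) -> int:
        """Inherent risk score before any safeguards."""
        return int(self.likelihood) * int(self.severity)

    def residual_score(self) -> int:
        """Score after mitigation; unset residual values fall back to the originals."""
        likelihood = self.residual_likelihood or self.likelihood
        severity = self.residual_severity or self.severity
        return int(likelihood) * int(severity)


@dataclass
class DPIARecord:
    """Written record of a DPIA, loosely following the steps listed above."""
    processing_description: str   # what data, how processed, access, retention
    necessity_justification: str  # why needed, alternatives considered
    risks: List[Risk] = field(default_factory=list)

    def unresolved_high_risks(self) -> List[Risk]:
        """Risks whose residual score is still at or above the cut-off."""
        return [r for r in self.risks if r.residual_score() >= HIGH_RISK_SCORE]

    def requires_prior_consultation(self) -> bool:
        """True when at least one high risk remains after mitigation."""
        return bool(self.unresolved_high_risks())


# Hypothetical example data for illustration only.
record = DPIARecord(
    processing_description="Fingerprint scanning for building access",
    necessity_justification="Badge access was reviewed as a less intrusive option",
    risks=[
        Risk(
            description="Biometric templates exposed in a breach",
            likelihood=Level.MEDIUM,
            severity=Level.HIGH,
            mitigations=["encryption", "access controls"],
            residual_likelihood=Level.LOW,
        ),
    ],
)
print(record.requires_prior_consultation())  # prints False
```

Storing inherent and residual values side by side keeps the documented outcome auditable: a reviewer can see which safeguards moved a risk below the cut-off and which risks would still trigger consultation with the supervisory authority.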

Use Cases

Article 35 applies across multiple industries and processing scenarios, including:

- Healthcare Systems: Processing large volumes of sensitive medical records using digital platforms.

- Artificial Intelligence and Machine Learning: Automated decision-making systems that profile individuals or predict behavior.

- Employee Monitoring Tools: Tracking productivity, location, or online activity in the workplace.

- Biometric Identification: Facial recognition, fingerprint scanning, or voice authentication systems.

- Smart Cities and IoT Devices: Large-scale monitoring through connected devices and sensors.

- Financial Services: Credit scoring systems and fraud detection technologies involving profiling.

These use cases highlight how DPIAs help organizations balance innovation with fundamental privacy rights.

Dependencies

Effective implementation of Article 35 relies on several interconnected GDPR principles and roles:

- Article 5 – Data Protection Principles: Lawfulness, fairness, transparency, and data minimization guide DPIA evaluations.

- Article 24 – Responsibility of the Controller: Controllers are accountable for ensuring DPIAs are properly conducted.

- Article 25 – Data Protection by Design and by Default: DPIAs reinforce privacy integration at early stages of processing.

- Article 36 – Prior Consultation: Triggered when DPIAs identify unresolved high risks.

- Data Protection Officer (DPO): Where a DPO has been designated, the controller must seek the DPO’s advice when carrying out the DPIA (Article 35(2)).

Tools and Technologies

Organizations often rely on a mix of tools and technologies to streamline DPIA processes:

- DPIA Templates and Frameworks: Standardized assessment models recommended by supervisory authorities.

- Risk Assessment Software: Tools that map data flows and identify vulnerabilities.

- Data Mapping and Inventory Tools: Provide visibility into how and where personal data is processed.

- Encryption and Security Technologies: Reduce risk exposure and strengthen compliance.

- Privacy Management Platforms: Centralize documentation, approvals, and audit trails.

- Automation and AI Governance Tools: Monitor algorithmic transparency and bias risks.

Let’s Wrap

EU GDPR Article 35 plays a critical role in shifting data protection from a reactive obligation to a preventive strategy. By requiring Data Protection Impact Assessments for high-risk processing activities, the GDPR empowers organizations to foresee risks, implement safeguards, and uphold individuals’ rights in an increasingly data-driven world.

DPIAs are more than compliance checklists; they are strategic instruments that promote trust, accountability, and responsible innovation. Organizations that integrate DPIAs into their operational culture not only meet regulatory expectations but also demonstrate a strong commitment to ethical data practices. In the long run, Article 35 supports sustainable digital growth while placing individual privacy at the heart of technological advancement.

For further reading:

- EU GDPR – Article 34 (Communication of a Personal Data Breach to the Data Subject)

- EU GDPR – Article 33 (Notification of a Personal Data Breach to the Supervisory Authority)

- EU GDPR – Article 32 (Security of Processing)

- EU GDPR – Article 31 (Cooperation with the Supervisory Authority)

- EU GDPR – Article 30 (Records of Processing Activities)

- EU GDPR – Article 29 (Processing Under the Authority of the Controller or Processor)

- EU GDPR – Article 28 (Processor)

- EU GDPR – Article 27 (Representatives of Controllers or Processors Not Established in the Union)

- EU GDPR – Article 26 (Joint Controllers)

- EU GDPR – Article 25 (Data Protection by Design and by Default)

- EU GDPR – Article 24 (Responsibility of the Controller)

- EU GDPR – Article 23 (Restrictions on Data Subject Rights)

- EU GDPR – Article 22 (Automated Individual Decision-Making, Including Profiling)

- EU GDPR – Article 21 (Right to Object)

- EU GDPR – Article 20 (Right to Data Portability)

- EU GDPR – Article 19 (Notification Obligation Regarding Rectification or Erasure of Personal Data or Restriction of Processing)

- EU GDPR – Article 18 (Right to Restriction of Processing)