Data Masking means hiding sensitive data so that unauthorized people cannot see the real information, while the system can still work normally. In other words, you replace real sensitive data with fake or hidden data, but keep it usable for testing, analysis, or support.

In Big Data environments, organizations store national IDs, passport numbers, credit card numbers, health records, customer contact details, and similar data. If this data is exposed, it can lead to identity theft, financial fraud, regulatory penalties, and reputational damage. Data masking protects this information, especially in testing, development, training, and analytics environments.

The diversity of data masking methods is a direct response to the complex landscape of modern information security. Because data types range from standard identifiers like names and emails to highly sensitive biometrics and medical records, a “one-size-fits-all” approach is fundamentally ineffective. Furthermore, the appropriate masking method is dictated by the specific objective (whether AI training, software testing, or third-party outsourcing) as well as the risk level associated with the data’s destination. When coupled with the need to satisfy distinct, often stringent regulatory frameworks like GDPR, HIPAA, or PCI-DSS, it becomes clear that a multi-faceted toolkit of masking strategies is essential to balance utility with compliance.

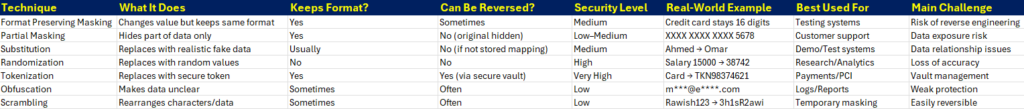

Data Masking Methods/Techniques

- FPM – Format Preserving Masking: Format Preserving Masking means changing the data but keeping the same structure and pattern. The data looks real and keeps the same length and format, so systems continue working without errors. For example, a credit card number like 4532 9876 1234 5678 may become 8271 4421 9988 3301. It is still 16 digits and still looks like a credit card number, but it is not the real one. Banks and airlines use this method in testing environments where developers need realistic data but must not use real customer information. The challenge is that if the masking method is weak, someone may reverse-engineer it.

- Partial Masking: Partial Masking hides only part of the data while showing some of it. This helps people recognize the information without seeing the full sensitive value. For example, a credit card number 4532 9876 1234 5678 may appear as XXXX XXXX XXXX 5678. An email like [email protected] may appear as mu@email.com. This is commonly used in customer service systems and dashboards. The risk is that if too much data is shown, attackers may combine it with other information to guess the full value.

- Substitution: Substitution replaces real data with different but realistic fake data. The replacement value looks natural and believable. For example, the real passenger name Ahmed Ali may be replaced with Omar Khan, or the city Jeddah may become Riyadh in a testing database. This method is useful in demo systems, training, and software testing. The advantage is that the data still looks real and meaningful. The challenge is that relationships between data fields can sometimes break if not handled carefully.

- Randomization: Randomization replaces real data with completely random values. The new data does not try to look similar to the original. For example, a salary of 15,000 SAR may become 38,742 SAR, and age 35 may become 62. This method is strong for privacy because the masked value carries no information about the original value. It is often used in analytics or when sharing datasets for research. However, it can damage data accuracy because patterns and relationships between fields may disappear.

- Tokenization: Tokenization replaces sensitive data with a meaningless token. The real value is stored securely in a special system called a token vault. For example, a credit card number 4532 9876 1234 5678 may become TKN98374621. The token has no value by itself. Even if hackers steal it, they cannot use it without access to the vault. This method is widely used in payment systems, banking, healthcare, and airline booking systems. It provides strong security but requires secure vault management and infrastructure.

- Obfuscation: Obfuscation makes data unclear or harder to understand. It does not necessarily replace the data completely but hides it in a confusing way. For example, an email [email protected] may appear as m@e*.com, or GPS coordinates may be slightly changed to hide exact location. It is often used in reports, logs, or public documents. The weakness is that simple obfuscation can sometimes be reversed or guessed.

- Scrambling: Scrambling rearranges characters or data elements to make them unreadable. For example, a value like Shahid123 may become 3h1dS2hai: the same nine characters in a different order. In datasets, rows or columns might be shuffled randomly. Scrambling is simple and fast, and is often used for temporary masking in testing. However, it is usually weak because it can sometimes be reversed if the method is known.
