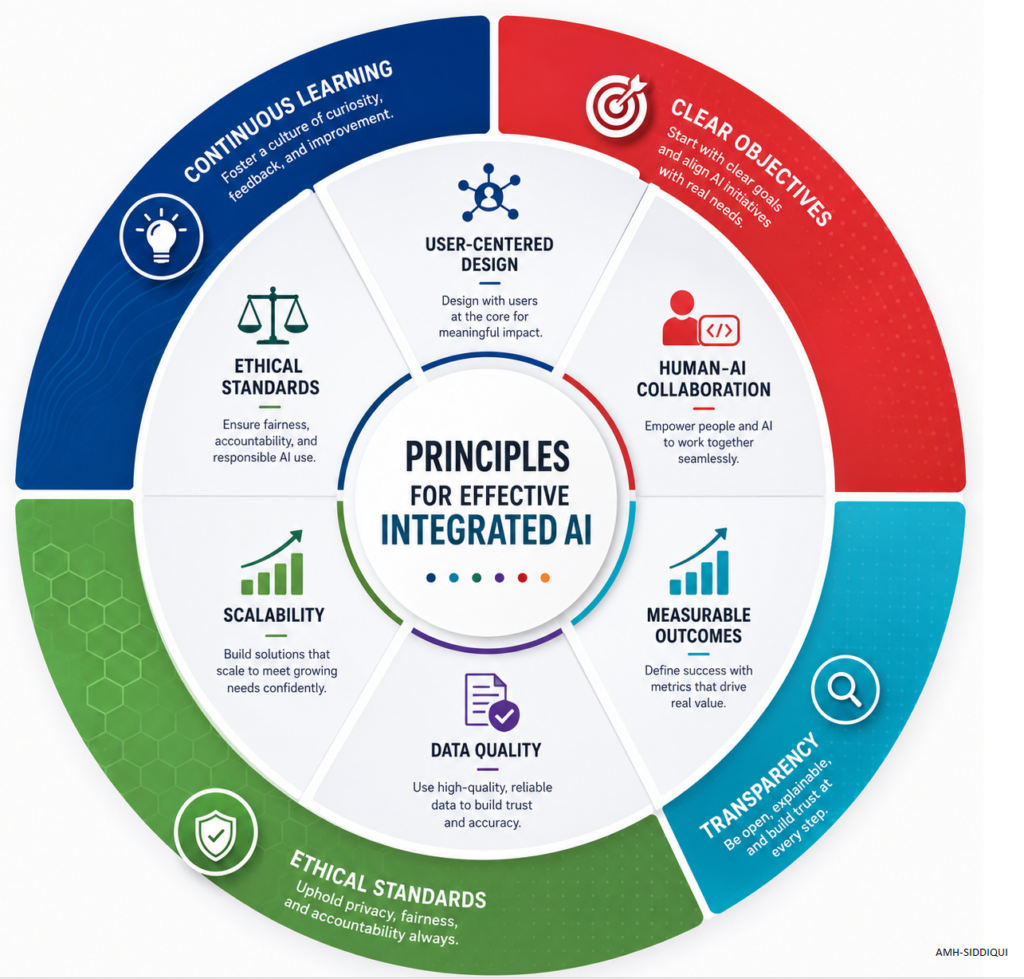

Principles are essential for Integrated AI because AI systems do not function in isolation. They interact with multiple systems, databases, business processes, users, regulatory requirements, and decision-making environments. Without well-defined principles, integrated AI environments can become inconsistent, insecure, biased, unsafe, difficult to govern, and challenging to maintain effectively. The following principles enable a robust and integrated AI framework:

- Clear Objectives: Integrated AI systems work best when their goals are clearly defined from the beginning. Organizations should know exactly what problem the AI is solving, what results are expected, and how success will be measured. Without clear objectives, AI projects often become confusing, expensive, and ineffective. For example, suppose an airline wants to use AI for customer service. Instead of a vague goal such as “improve customer experience”, it defines a clear objective such as “reduce customer response time from 10 minutes to 2 minutes using AI chat support”. This specific target helps the team design, test, and evaluate the AI properly.

- Ethical Standards: AI must follow ethical principles to ensure fairness, privacy, accountability, and non-discrimination. Ethical AI avoids harming people, respects personal data, and treats users equally regardless of gender, nationality, or background. Organizations should establish policies and governance to monitor AI behavior and prevent misuse. For example, a bank uses AI to approve loans. Ethical standards ensure the AI does not reject applicants unfairly because of age, ethnicity, or gender. The bank regularly audits the AI's decisions to confirm fairness and compliance with regulations.

- Data Quality: AI systems depend heavily on data. Poor-quality, incomplete, outdated, or inaccurate data can produce incorrect results and bad decisions. High-quality data should be accurate, consistent, relevant, and properly managed. Data governance plays a critical role in maintaining trustworthy AI outputs. For instance, a hospital uses AI to predict patient risks. If patient records contain missing medical histories or incorrect diagnoses, the AI predictions may become dangerous. Clean and validated healthcare data improves the reliability of AI recommendations.

- Transparency: Transparency means users and stakeholders should understand how AI systems make decisions. People are more likely to trust AI when explanations are clear and understandable. Transparent AI also helps organizations identify errors, biases, and compliance issues more easily. For example, an HR department uses AI to shortlist job candidates. Instead of simply rejecting applicants automatically, the AI explains that a candidate was not shortlisted because required certifications or years of experience were missing. This builds trust and accountability.

- User-Centered Design: AI systems should be designed around the needs, abilities, and expectations of users. The technology should be simple, accessible, and helpful rather than complicated or frustrating. Understanding user behavior improves adoption and overall satisfaction. For example, a retail company develops an AI-powered shopping assistant. Instead of using technical language, the assistant communicates in simple everyday language and provides easy-to-follow product recommendations based on customer preferences.

- Human-AI Collaboration: AI should support humans rather than completely replace them. The best results often come when humans and AI work together, combining machine speed and data analysis with human judgment, creativity, and emotional understanding. In cybersecurity, AI quickly detects suspicious activities across millions of network logs, while human analysts investigate and decide whether the threat is real. The AI increases efficiency, and the humans provide critical thinking and final judgment.

- Continuous Learning: AI systems should continuously improve through feedback, monitoring, and updated data. Business environments, customer behaviors, and regulations change over time, so AI models must adapt to remain effective and accurate. For example, an e-commerce website uses AI to recommend products. During holiday seasons, customer buying patterns change significantly. The AI continuously learns from new purchases and updates its recommendations to match current trends.

- Scalability: An effective integrated AI solution should be able to grow with the organization. Scalability means the AI can handle growing numbers of users, larger data volumes, and more processes without major performance problems or a complete redesign. For example, a startup initially uses AI to process 1,000 customer requests per day. As the business grows internationally, the AI system scales to manage 100,000 requests daily while maintaining fast response times.

- Measurable Outcomes: AI initiatives should produce measurable business value. Organizations need clear performance indicators to evaluate whether the AI is delivering expected benefits such as cost reduction, improved efficiency, increased revenue, or better customer satisfaction. For instance, a logistics company introduces AI for route optimization. After AI implementation, the company measures outcomes such as reduced fuel costs, faster delivery times, and improved customer ratings to confirm the AI project’s success.
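
Two of the principles above, Transparency and Ethical Standards, can be made concrete with a small sketch. The Python below is illustrative only: the `Candidate` class, the function names, and the group labels are hypothetical and do not come from any particular product or library. The first function returns the reasons behind a shortlisting decision, as in the HR example, and the second computes approval rates per group, which is one simple input to the kind of fairness audit described in the bank example. A real audit would need more than a rate comparison.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Candidate:
    # Hypothetical, simplified applicant record used only for illustration.
    name: str
    years_experience: int
    certifications: set = field(default_factory=set)


def shortlist_with_reasons(candidate, min_years, required_certs):
    """Return (shortlisted, reasons) so a rejection always carries an explanation."""
    reasons = []
    if candidate.years_experience < min_years:
        reasons.append(
            f"needs {min_years} years of experience, has {candidate.years_experience}"
        )
    missing = sorted(set(required_certs) - candidate.certifications)
    if missing:
        reasons.append("missing certifications: " + ", ".join(missing))
    # Shortlisted only when no rejection reason was recorded.
    return (not reasons, reasons)


def approval_rate_by_group(decisions):
    """Compute the share of approved decisions per group.

    `decisions` is an iterable of (group_label, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}
```

Calling `shortlist_with_reasons(Candidate("A. Example", 2, {"PMP"}), 3, {"PMP", "CSM"})` yields a rejection together with both reasons (too little experience and the missing certification). A large gap between the values returned by `approval_rate_by_group` is a signal to investigate further, not proof of unfair treatment on its own.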
